Our Method of Changing the Scene Light

Moving to the next part of the process, we faced the issue of changing the lighting in the scene. This step is the most difficult, both computationally and conceptually, because it is not entirely clear how to do it correctly. The algorithm itself is easy to follow, but it involves a lot of mathematical computation and depends on first obtaining a linear color space.

The primary difficulty is that almost all consumer digital cameras output images in the sRGB color space, which is not linear, whereas changing the scene light correctly requires the image to be in a linear color space. We therefore modeled the backward path from sRGB to linear space. Because every camera has its own color image processing pipeline, this was a challenging process, but we succeeded.
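To make the idea concrete, here is a minimal sketch of the simplest possible version of this step. It assumes the image follows the standard sRGB transfer function exactly, which real cameras do not (that is precisely why we had to model the backward path per pipeline), and it assumes a von Kries-style per-channel scaling for the light change. The function names `srgb_to_linear`, `linear_to_srgb`, and `relight_pixel` are illustrative and are not part of our system.

```python
def srgb_to_linear(c: float) -> float:
    """Decode one sRGB-encoded channel value in [0, 1] to linear light.

    Uses the standard sRGB piecewise curve; a real camera pipeline
    (tone mapping, gamut mapping, etc.) is NOT modeled here.
    """
    if c <= 0.04045:
        return c / 12.92
    return ((c + 0.055) / 1.055) ** 2.4


def linear_to_srgb(c: float) -> float:
    """Encode one linear-light channel value back to sRGB."""
    c = min(max(c, 0.0), 1.0)  # clip values pushed out of range by relighting
    if c <= 0.0031308:
        return c * 12.92
    return 1.055 * c ** (1 / 2.4) - 0.055


def relight_pixel(rgb, source_light, target_light):
    """Change the scene light for one sRGB pixel (assumed diagonal model).

    rgb, source_light and target_light are (R, G, B) triples; the lights
    are linear RGB illuminant colors with non-zero components.
    """
    out = []
    for value, src, dst in zip(rgb, source_light, target_light):
        linear = srgb_to_linear(value)               # undo the sRGB curve
        out.append(linear_to_srgb(linear * dst / src))  # rescale, re-encode
    return tuple(out)


if __name__ == "__main__":
    # Hypothetical lights: shift a mid-gray pixel toward a warmer illuminant.
    print(relight_pixel((0.5, 0.5, 0.5), (1.0, 1.0, 1.0), (1.0, 0.9, 0.7)))
```

The important point is the order of operations: the scaling is applied to linear values, not to the sRGB-encoded ones. Applying the same gains directly to encoded values would give a different and physically incorrect result.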

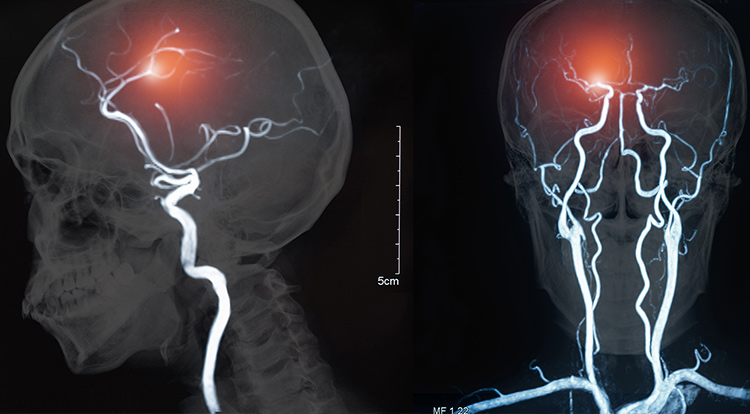

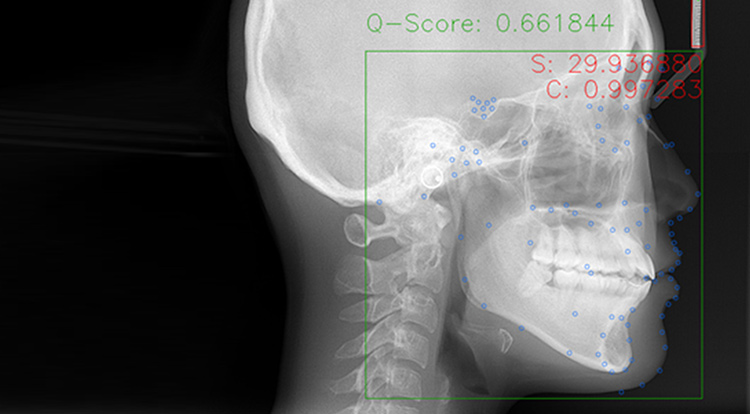

Notably, the entire system consists of only the two blocks described above. Here are the results from machine vision applications relying on daylight modeling:

We compared the results of our approach with those of other solutions. The comparison confirmed its top-of-the-line performance on all known datasets in terms of the general color-constancy metric.
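For readers unfamiliar with how color-constancy methods are usually scored, the sketch below computes the angular error between an estimated and a ground-truth illuminant, a measure widely used in the color-constancy literature. Treating it as the metric behind the comparison above is an assumption made for illustration, and the function name `angular_error_degrees` is hypothetical.

```python
import math


def angular_error_degrees(estimated, ground_truth):
    """Angle in degrees between two illuminant RGB vectors.

    The measure ignores overall brightness: scaling either vector
    by a positive constant leaves the error unchanged.
    """
    dot = sum(e * g for e, g in zip(estimated, ground_truth))
    norm_e = math.sqrt(sum(e * e for e in estimated))
    norm_g = math.sqrt(sum(g * g for g in ground_truth))
    cosine = dot / (norm_e * norm_g)
    cosine = min(1.0, max(-1.0, cosine))  # guard against rounding drift
    return math.degrees(math.acos(cosine))


if __name__ == "__main__":
    # Hypothetical illuminant estimates, not data from our experiments.
    print(angular_error_degrees((0.9, 1.0, 0.8), (1.0, 1.0, 1.0)))
```

A lower angle means the estimated light color is closer to the true one; results over a dataset are typically summarized with statistics such as the mean or median of this error.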

We also tested our solution on images with extreme illumination and found that it could use some improvement. During our research, we discovered that chroma-based features were underrepresented in the dataset distribution. To mitigate this problem, we once again extended the existing datasets using a custom augmentation mechanism. As a result, we achieved the most robust modeling and inference algorithms currently available.